In 2015, the Office of Indigent Defense Services (IDS) asked the School of Government to conduct an online survey of how superior and district court judges view IDS’s administration of indigent defense in North Carolina. Last week, the School issued its report of the survey results, Trial Judges’ Perceptions of North Carolina’s Office of Indigent Defense Services: A Report on Survey Results (March 2016) (referred to below as the Report). The verdict? Judges have a positive view of IDS’s performance, overall and in several key areas, but the results include a few warning signs for indigent defense.

Methodology. Development and implementation of the survey were led by David Brown, director of the School’s Applied Public Policy Initiative. Before coming to the School, David spent eight years with the U.S. Government Accountability Office (GAO) in Washington, D.C., where he conducted performance audits of major federal programs. The survey he developed was disseminated by email to North Carolina’s trial judges on September 30, 2015, and was open for a little more than one month. To ensure candor, all responses were anonymous. The survey included closed-ended questions, such as questions asking judges to indicate their satisfaction or dissatisfaction on a 1 to 5 scale (very satisfied, satisfied, neither satisfied nor dissatisfied, dissatisfied, and very dissatisfied), as well as open-ended questions allowing judges to comment at greater length. By the close of the survey, 54 of the state’s 112 superior court judges and 81 of the state’s 270 district court judges had responded. The Report notes that, as surveys go, the response rate was “relatively robust,” with 48% of superior court judges and 30% of district court judges responding. See Report at p. 31. The survey responses represent a cross-section of judges from around the state who have experience with different methods of indigent defense representation. See Report at p. 7 (providing maps showing distribution of responses based on judicial district). The Report cautions generally about drawing conclusions from surveys because it is rare for everyone to respond, but adds that in light of the broad response rate and in the absence of contrary evidence, “it seems reasonable to assume that the views of those judges who did respond are adequately representative of their colleagues as a whole.” See Report at p. 31.
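For readers who want to check the response-rate arithmetic, here is a minimal Python sketch. It uses only the counts stated above; the variable and function names are mine, and the combined rate across both courts is my own calculation, not a figure quoted from the Report.

```python
# Respondent and population counts as stated in the post: (responded, total).
counts = {
    "superior": (54, 112),
    "district": (81, 270),
}


def response_rate(responded: int, total: int) -> float:
    """Return the response rate as a percentage."""
    return 100 * responded / total


rates = {court: response_rate(r, n) for court, (r, n) in counts.items()}

# Combined rate across both courts (derived here, not quoted from the Report).
total_responded = sum(r for r, _ in counts.values())
total_judges = sum(n for _, n in counts.values())
combined = response_rate(total_responded, total_judges)

for court, rate in rates.items():
    print(f"{court} court: {rate:.0f}%")
print(f"combined: {combined:.0f}%")
```

The rounded rates match the 48% and 30% reported for superior and district court judges, and the 135 responses from 382 trial judges work out to roughly 35% overall.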

The 36-page Report details the questions presented to the judges and their responses, with useful tables and charts. Below are highlights of some key results.

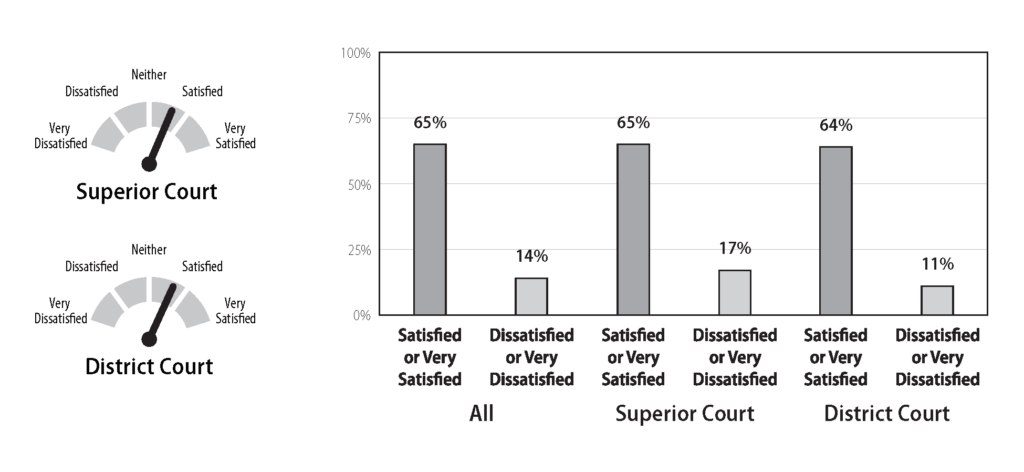

Overall level of satisfaction. Trial judges were asked their overall level of satisfaction with IDS’s administration of indigent representation. In noncapital cases, 65% responded that they were satisfied or very satisfied, 14% responded that they were dissatisfied or very dissatisfied, and the average was closer to satisfied than to neither satisfied nor dissatisfied. See Report at p. 15. The “dial” chart and bar graph from the Report illustrate this result.

The judges’ responses were similar for capital cases, with 58% responding that they were satisfied or very satisfied, 9% responding that they were dissatisfied or very dissatisfied, and the average closer to satisfied than to neither. See Report at p. 14. For capital cases, the survey also asked judges about any difficulties in the scheduling of cases for trial and the timeliness of appointment of counsel. Some judges had concerns, stating that appointments did not happen quickly enough or did not include enough local attorneys. As with other aspects of the administration of indigent defense, however, the majority of judges responded favorably, indicating that they had not encountered scheduling difficulties and were satisfied or very satisfied with the timeliness of appointments. See Report at pp. 12–13.

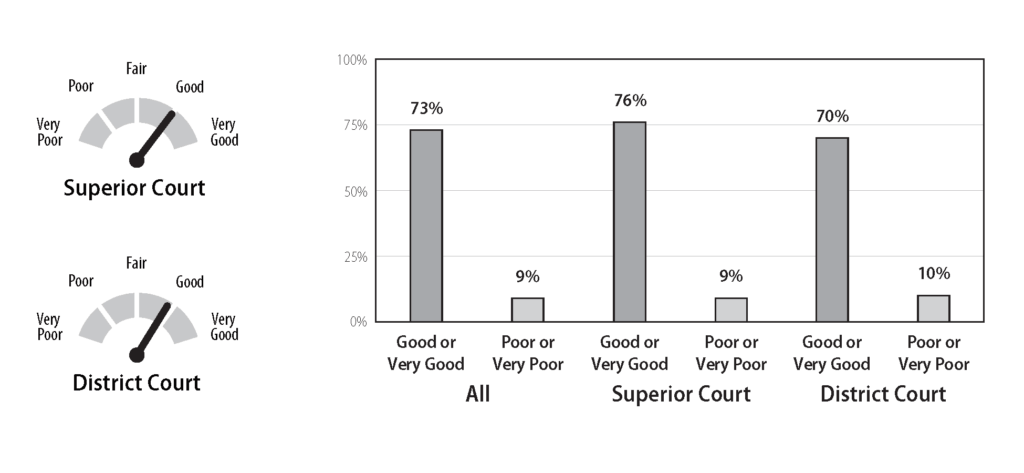

Administration of budget. When asked to rate IDS’s administration of the indigent defense budget, 67% rated IDS’s performance as good or very good and 14% rated it as poor or very poor. See Report at p. 25. When asked more broadly to rate IDS’s provision of constitutionally effective representation given its resources, 73% rated IDS’s performance as good or very good, with 9% rating it as poor or very poor and an overall average of good. See Report at p. 23. The charts from the Report illustrate these results.

Trial judges also were asked whether they had seen any impact on representation from the 2011 reduction in payment rates for private assigned counsel (PAC). Here, the judges raised concerns. The Report observes: “By a two-to-one margin, judges responded that they had seen impacts on the quality of representation by PAC that they attribute to pay rate reductions.” See Report at p. 18. The survey asked the 80 judges who responded “yes” to describe the impact of the rate reduction. According to the Report, “of all the open-ended questions in our survey, this one received the most responses,” with 59 of the 66 judges who commented indicating that the quality of representation had suffered. See Report at p. 19 (setting out some of the judges’ observations).
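To put those counts in proportion, here is a short Python sketch. It assumes, as the text above suggests, that the 66 judges who described the impact were a subset of the 80 who answered “yes”. The variable names and the resulting percentages are mine, not the Report’s.

```python
# Counts as stated in the post for the question on PAC rate reductions.
said_yes = 80          # judges who answered "yes" to seeing an impact
wrote_comment = 66     # judges who described the impact (assumed subset of the 80)
quality_suffered = 59  # comments indicating quality of representation had suffered

# Share of "yes" judges who went on to describe the impact.
comment_rate = 100 * wrote_comment / said_yes

# Share of those comments indicating that quality had suffered.
suffered_share = 100 * quality_suffered / wrote_comment

print(f"{comment_rate:.1f}% of 'yes' judges described the impact")
print(f"{suffered_share:.1f}% of those comments said quality had suffered")
```

On that assumption, a little over four in five of the “yes” judges wrote a comment, and close to nine in ten of those comments said quality had suffered.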

Method of representation. The structure of the survey allowed the Report authors to analyze the responses of judges who had experience with different methods of representation—principally, public defender offices and private counsel working under contract with IDS. Overall, judges found that IDS did a better job in providing constitutionally effective representation in districts with public defender offices. They were less satisfied in districts with contract counsel, although the Report observes that none of the results could be described as “negative.” See Report at p. 30 (discussing results and providing table of overall responses). Some differences existed in judges’ views on this issue in capital and noncapital cases. See Report at pp. 28–29. Because of limitations in the data, the Report recognizes that this analysis is necessarily limited. See Report at p. 27.

Day-to-day administration. Another set of questions asked judges to evaluate their interactions with IDS office staff. The questions, which asked for a yes or no answer, elicited the most positive responses in the survey. When asked whether they were satisfied with the timeliness of the IDS staff’s response, 93% of the judges said yes and 7% said no. When asked whether they were satisfied with the substance of the IDS staff’s response, 84% said yes and 16% said no. See Report at pp. 21–23.

Concluding observations. The Report concludes that the judges’ responses to the closed-ended questions (those calling for ratings) “contain many indicators of a healthy system.” See Report at p. 31. Among other things, “the number of positive assessments—‘Satisfied’ or ‘Very Satisfied’ in some questions, ‘Good’ or ‘Very Good’ in others—tended to greatly outnumber the negative ones.” Id.

The Report found that the judges’ responses to open-ended questions were more difficult to categorize, but certain themes emerged. Among other things, judges expressed concerns about management and supervision of indigent defenders and related concerns about their performance. They worried that “defense counsel were too inexperienced, insufficiently available, and unwilling (or unable) to spend sufficient time preparing for cases.” See Report at p. 31. Some judges expressed contrary views, “suggesting that the existence and extent of any problems vary by district.” Id. Some of the “most striking survey results” related to the impact on the quality of representation of the payment rate reductions for assigned counsel. Id.

The above provides a snapshot of the survey results. If you are interested in the administration of indigent defense, I encourage you to read the full Report.